Lately, a few blogs have been running catchy headlines such as “R.I.P. Big Data” or “Big Data is Passé”. With due respect, I find them insane, as they have not understood the terms correctly.

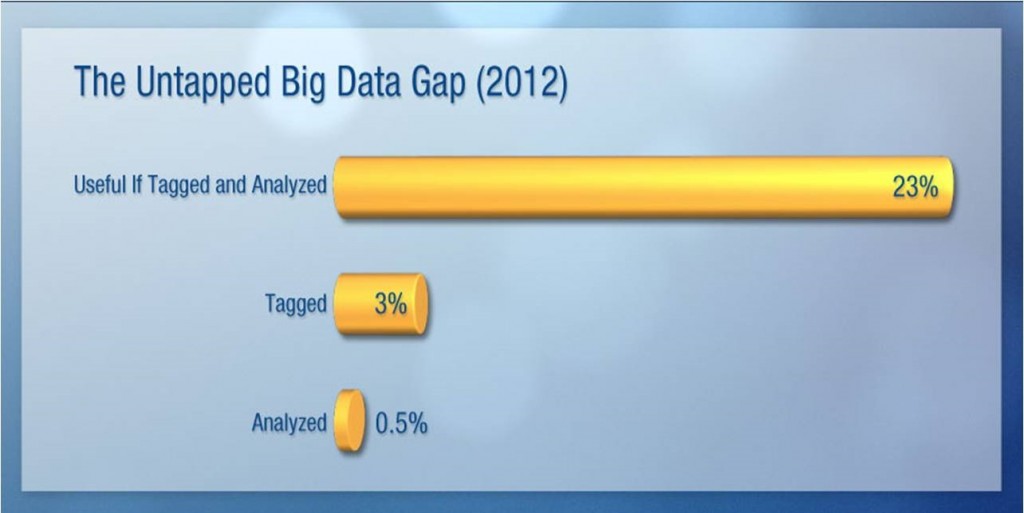

The Untapped Potential of Big Data

IDC says, “Call this the Big Data gap — information that is untapped, ready for enterprising digital explorers to extract the hidden value in the data. The bad news: This will take hard work and significant investment. The good news: As the digital universe expands, so does the amount of useful data within it.”

Source: IDC’s Digital Universe Study, sponsored by EMC (Dec 2012)

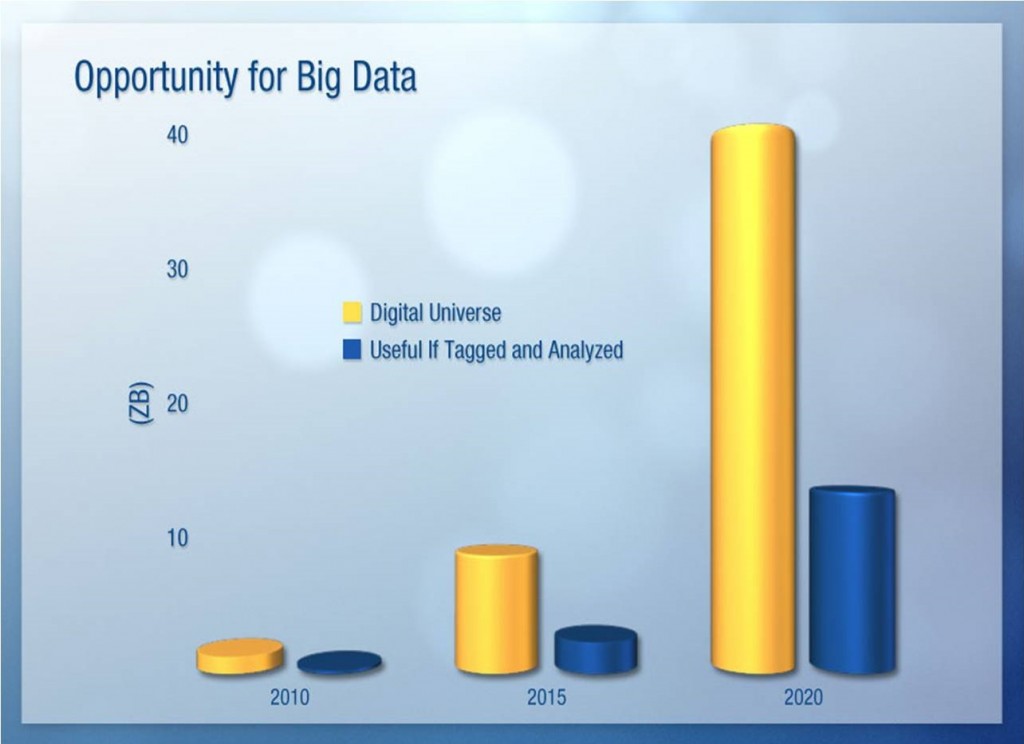

The study further explains that the digital universe itself, of course, comprises data — all kinds of data. However, the vast majority of new data being generated is unstructured. This means that more often than not, we know little about the data, unless it is somehow characterized or tagged — a practice that results in metadata. Metadata is one of the fastest-growing sub-segments of the digital universe (though metadata itself is a small part of the digital universe overall). We believe that by 2020, a third of the data in the digital universe (more than 13,000 exabytes) will have Big Data value, but only if it is tagged and analyzed (see “Opportunity for Big Data”).

Source: IDC’s Digital Universe Study, sponsored by EMC (Dec 2012)

Smart Data

The unstructured character and the ever-growing volume of data are indeed overwhelming. That’s why organizations across verticals are using all sorts of predictive, correlation and operational analyses to convert the ocean of data into meaningful, efficient and actionable insights. Simply put – intelligence converts ‘Big Data’ into ‘Smart Data’.

Example: The Large Hadron Collider (LHC) experiments represent about 150 million sensors delivering data 40 million times per second. There are nearly 600 million collisions per second. After filtering and refraining from recording more than 99.999% of these streams, there are 100 collisions of interest per second.
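To put the LHC filtering in perspective, here is a quick back-of-the-envelope calculation in Python. It uses only the two figures quoted above (600 million collisions per second, 100 kept); the variable names are mine and purely illustrative.

```python
# Figures quoted in the LHC example above.
collisions_per_second = 600_000_000
kept_per_second = 100

# Fraction of collisions that survive the filtering.
fraction_kept = kept_per_second / collisions_per_second

# Share of collisions that are never recorded, as a percentage.
percent_discarded = (1 - fraction_kept) * 100

print(f"Kept: 1 in {collisions_per_second // kept_per_second:,} collisions")
print(f"Discarded: {percent_discarded:.5f}%")
```

In other words, roughly one collision in six million is kept, which is consistent with the "more than 99.999%" figure.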

Another example is the recent use of Big Data and crowd-sourcing by the FBI to hunt the Boston Marathon bombing suspects. The use of intelligent algorithms to convert crowd-sourced data (images, tweets, articles, text, analytics, etc.) into relevant, worthwhile and compelling information is fast catching on as a way to enhance public safety, governance and healthcare.

Fast Data

By Fast Data, we refer to real-time information that is relevant and easily accessible, thereby reducing time to insight. The ultimate wish of every decision maker is to get self-service BI with streaming data and analyses. This is already happening, to a degree that depends on the need and, of course, on the technology in use.

Jack Dorsey, co-founder of Twitter and financial-services upstart Square, said, “When I release a new product or when I release a new ad campaign or if I’m a government, I release a new policy or law, I can get instant feedback on what people are thinking and how they interact with it and how they react to it. I can use that information to tune and to better my policies or my product or my company or my image or whatever I’m dealing with, all in real time.”

“On the battlefield, the time it takes to access intelligence can be a matter of life or death. Harvesting, analyzing, and rapidly converting large data sets into actionable intelligence is currency for the military. Big Data is now a fixture on the battlefield and across the global security landscape; but managing the growing amount of available information has never been more relevant to how our country is fighting wars and planning for future threats”, says Chris Young, President of military and intelligence contractor ITT Exelis Geospatial Systems.

Conclusion

The data explosion is a reality – for now and for the future. By 2020, IDC expects business data to increase 44 times to 35 zettabytes (1 zettabyte is 10^21 bytes, that is, a 1 followed by 21 zeros). And it is only getting messier in terms of its volume, variety, velocity and complexity. The need is to move towards Smart Data and, wherever possible, Fast Data.

Source: IDC
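For a feel of the scale behind the projection above, here is a small Python sketch. It assumes decimal (SI) units, so 1 zettabyte = 10^21 bytes, and simply divides the quoted 35 zettabytes by the quoted 44x growth to get the implied starting point. That baseline is my own arithmetic, not a figure taken from IDC.

```python
# Decimal (SI) definition: 1 zettabyte = 10**21 bytes (an assumption about units).
BYTES_PER_ZETTABYTE = 10**21

# Figures quoted in the conclusion above.
projected_zb = 35
growth_factor = 44

# Count the zeros after the leading 1 in one zettabyte.
zeros = len(str(BYTES_PER_ZETTABYTE)) - 1

# Starting volume implied by "44 times to 35 zettabytes".
baseline_zb = projected_zb / growth_factor

print(f"1 zettabyte = 1 followed by {zeros} zeros bytes")
print(f"35 zettabytes = {projected_zb * BYTES_PER_ZETTABYTE:,} bytes")
print(f"Implied starting volume: about {baseline_zb:.2f} zettabytes")
```

So the quoted growth implies a starting point of a little under one zettabyte.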

The fun of disruptive technology is that it keeps spoiling us – while at the same time challenging and scaring us with new possibilities and, of course, new jargon 🙂

See my other blog to get a detailed understanding of Big Data.